Link to the actual paper: https://www.nature.com/articles/s41586-025-08839-w

The repro and verification will take time. Months or even years. Don’t trust anyone who says it’s definitely real or definitely bunk. Time will tell.

Too bad the US can’t import any of it.


“These chips are 10,000 times faster, therefore we will increase our tariffs to 10,100%!”

That was yesterday. It’s doubled since then, IIRC.

China scientists

So, Chinese scientists?

Chientists

Probably because “Chinese” is both an ethnicity and a nationality. There are ethnic Chinese people all over the world, and a few countries and regions have an ethnic Chinese majority but are not related to China. Calling them the same thing is playing into the PRC’s “all ethnic Chinese pledge their allegiance to China” nonsense.

Israel likes that game of pretend. They believe anti-Zionist Jews are traitors.

It’s a reasonable assumption that someone in China is Chinese.

The reverse, however, isn’t true. It may be somewhat understandable, but it isn’t entirely reasonable, to assume that someone who is Chinese is from China, which is what I’m trying to say.

Me stutter? No think so!

Yeah… At best clickbaity as fuck, at worst a complete scam.

Any time there is a 10x or more in a headline you are 10x or more likely to be right by calling it BS.

You just fucking wait. Trump is bringing manufacturing to the US. And when that plant opens someday you’ll be so sorry you doubted.

Wow, finally graphene has been cracked. Exciting times for portable low-energy computing

It’s like temu. 100x discount.

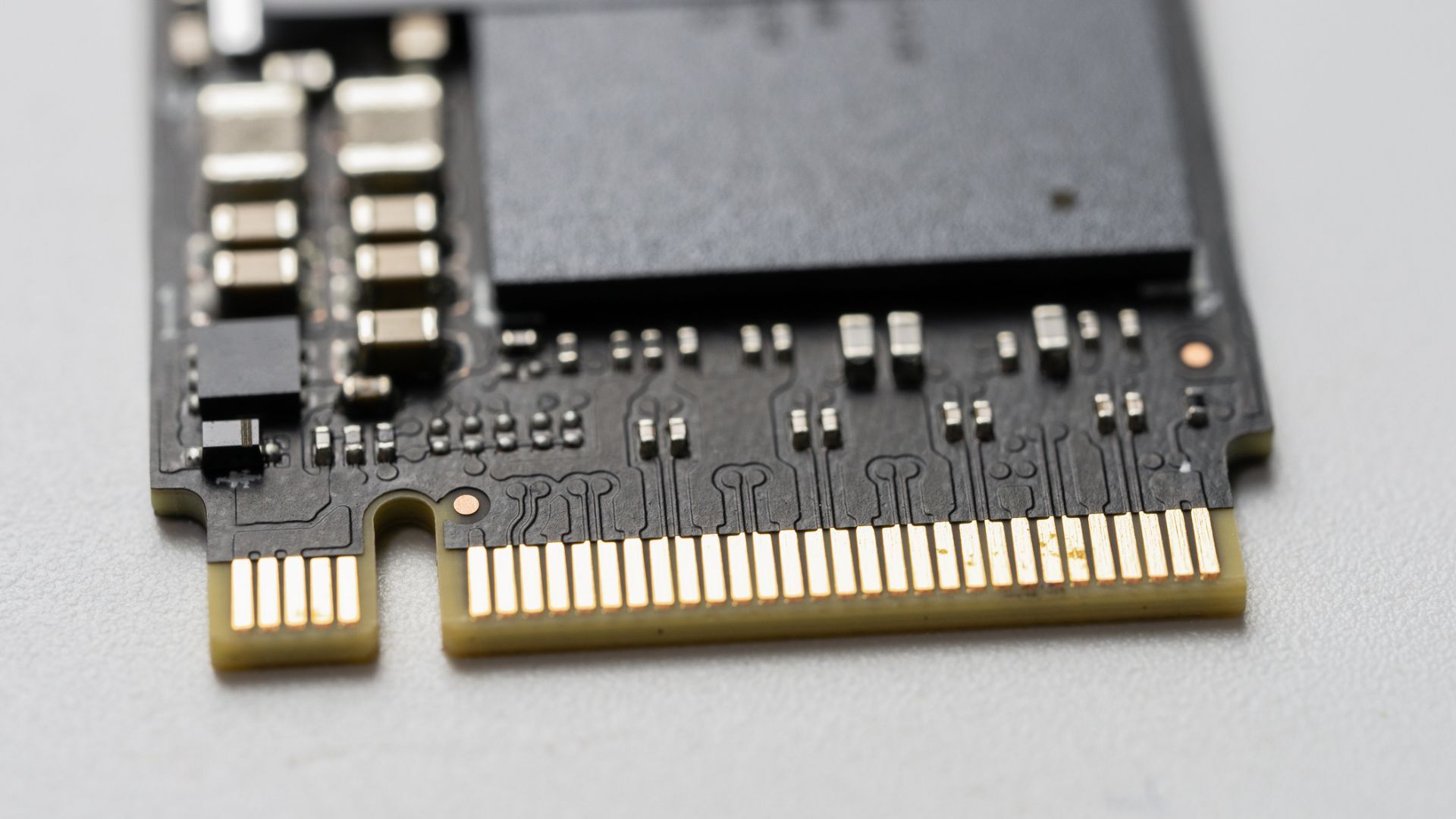

Does this flash have the same write lifespan as the flash in solid-state drives? If so, it feels like this would most certainly not be useful for AI, as that use case would involve doing billions/trillions of writes in a very short span of time.

Edit: It looks like they do: https://www.enterprisestorageforum.com/hardware/life-expectancy-of-a-drive/

Manufacturers say to expect flash drives to last about 10 years based on average use. But life expectancy can be cut short by defects in the manufacturing process, the quality of the materials used, and how the drive connects to the device, leading to wide variations. Depending on the manufacturing quality, flash memory can withstand between 10,000 and a million [program/erase] cycles.
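For a rough feel of what those cycle counts mean under heavy rewriting, here’s a back-of-the-envelope sketch in Python. The write rate is a number I made up for illustration, it assumes the same cell gets hammered with no wear leveling, and it uses the endurance range for conventional flash quoted above, which may not apply to the device in the paper.

```python
def seconds_until_worn_out(pe_cycles: int, writes_per_second: float) -> float:
    """Time until one cell uses up its program/erase budget (no wear leveling)."""
    if writes_per_second <= 0:
        raise ValueError("writes_per_second must be positive")
    return pe_cycles / writes_per_second


# Endurance range from the quote above: 10,000 to 1,000,000 program/erase cycles.
LOW_ENDURANCE = 10_000
HIGH_ENDURANCE = 1_000_000

# Assumption for illustration only: the same cell is rewritten 1,000 times per second.
ASSUMED_WRITES_PER_SECOND = 1_000.0

low = seconds_until_worn_out(LOW_ENDURANCE, ASSUMED_WRITES_PER_SECOND)
high = seconds_until_worn_out(HIGH_ENDURANCE, ASSUMED_WRITES_PER_SECOND)
print(f"{low:.0f} s to {high:.0f} s")  # prints "10 s to 1000 s"
```

Real drives spread writes across many cells, so actual lifetimes are far longer than this worst case, but it shows why constant rewriting of the same locations is a problem.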

For AI processing, I don’t think it would make much difference if it lasted longer. I could be wrong, but afaik, running the actual transformer for AI is done in VRAM, and staging and preprocessing is done in RAM. Anything else wouldn’t really make sense speed- and bandwidth-wise.

Oh I agree, but the speeds in the article are much faster than any current volatile memory. So it could theoretically be used to vastly expand memory availability for accelerators/TPUs/etc for their onboard memory.

I guess if they can replicate these speeds in volatile memory and increase the buses to handle it, then they’d be really onto something here for numerous use cases.