I think a substantial part of the problem is the employee turnover rates in the industry. It seems to be just accepted that everyone is going to jump to another company every couple of years (usually due to companies not giving adequate raises). This leads to a situation where, consciously or subconsciously, no one really gives a shit about the product. Everyone does their job (and only their job, not a hint of anything extra), but they’re not going to take on major long-term projects, because they’re already one foot out the door, looking for the next job. Shitty middle management of course drastically exacerbates the issue.

I think that’s why there’s a lot of open source software that’s better than the corporate stuff. Half the time it’s just one person working on it, but they actually give a shit.

Definitely part of it. The other part is soooo many companies hire shit idiots out of college. Sure, they have a degree, but in many cases they’ve barely understood the concept of deep logic after four years, and they have virtually zero experience with ANY major framework or library.

Then, dumb management puts them on tasks they’re not qualified for, add on that Agile development means “don’t solve any problem you don’t have to” for some fools, and… the result is the entire industry becomes full of functionally idiots.

It’s the same problem with late-stage capitalism… Executives focus on money over longevity and the economy becomes way more tumultuous. The industry focuses way more on “move fast and break things” than on making quality, and … here we are, discussing how the industry has become shit.

Shit idiots with enthusiasm could be trained, mentored, molded into assets for the company, by the company.

À la an apprenticeship structure or something similar, like how you need X years before you’re a journeyman at many hands-on trades.

But uh, nope, C suite could order something like that be implemented at any time.

They don’t though.

Because that would make next quarter projections not look as good.

And because that would require actual leadership.

This used to be how things largely worked in the software industry.

But, as with many other industries, now finance runs everything, and they’re trapped in a system of their own making… but it’s not really trapped, because… they’ll still get a golden parachute no matter what happens, everyone else suffers, so that’s fine.

Exactly. I don’t know why I’m being downvoted for describing the thing we all agree happens…

I don’t blame the students for not being seasoned professionals. I clearly blame the executives that constantly replace seasoned engineers with fresh hires they don’t have to pay as much.

Then everyone surprise pikachu faces when crap is the result… Functionally idiots is absolutely correct for the reality we’re all staring at. I am directly part of this industry, so this is more meant as honest retrospective than baseless namecalling. What happens these days is idiotry.

Yep, literal, functional idiots, as in, they keep doing things that are easily proven stupid, mainly because they are too stubborn to admit they could be wrong about anything.

I used to be part of this industry, and I bailed, because the ratio of higher ups that I encountered anywhere, who were competent at their jobs vs arrogant lying assholes was about 1:9.

Corpo tech culture is fucked.

Makes me wanna chip in a little with a Johnny Silverhand solo.

Fuck man, why don’t more ethical-ish devs join to make stuff? What’s the missing link on top of easy sharing like FOSS kinda’ already has?

Obviously programming is a bit niche, but fuck… how can ethical programmers come together to survive under capitalism? Sure, profit sharing and coops aren’t bad, but something of a cultural nexus is missing in this space it feels…

Well, I’m not quite sure how to … intentionally create a cultural nexus … but I would say that having something like lemmy, piefed, the fediverse, is at least a good start.

Socializing, discussion, via a non corpo platform.

Beyond that, uh, maybe something more like an actual syndicalist collective, or at least a union?

Yeah, a union would be great, although I feel like that would be something that would have to come quite a ways down the road of ethical devs coming together. After all, not even the FOSS community agrees on what is ethical to give away and to whom.

Maybe a union is still the right term for the abstract ‘coming together’ I’m thinking of, since it’s hard to imagine how they could go from a generic collective to a body that could actually make effective demands, but perhaps it’s roughly the same process as getting a job-wide union off the ground.

My hot take: lots of projects would benefit from a traditional project management cycle instead of trying to force Agile on every project.

Agile SHOULD have a lot of the things ‘traditional’ management looks for! Though so many, including many college teachers I’ve heard, think of it way too strictly.

It’s just the time scale shrinks as necessary for specific deliverable goals instead of the whole product… instead of having a design for the whole thing from top to bottom, you start with a good overview and implement general arch to service what load you’ll need. Then you break down the tasks, and solve the problems more and more and yadda yadda…

IMO, the people that think Agile Development means only implement the bare minimum … are part of the complete fucking idiot portion of the industry.

Funny how agile seems to mean different things to different people.

Agile was the cool new thing years back and has been abused and misused, and now pretty much every dev company forces it on their team but does whatever the fuck they want.

Agile should have a lot of traditional project management but doesn’t because it became the MBA wet dream of metrics. And when metrics become the target, people will do whatever they need to do to meet the metrics instead of actually progressing the project.

Yep! Funny how so many good ideas get ruined by the people that think they deserve the biggest pay checks…

That’s “disrupting the industry” or “revolutionizing the way we do things” these days. The “move fast and break things” slogan has too much of a stink to it now.

Probably because all the dummies are finally realizing it’s a fucking stupid slogan that’s constantly being misinterpreted from what it’s supposed to mean. lol (as if the dummies even realize it has a more logical interpretation…)

Now if only they would complete the maturation process and realize all of the tech bro bullshit runs counter to good engineering or business…

True, but this is a reaction to companies discarding their employees at the drop of a hat, and only for “increasing YoY profit”.

It is a defense mechanism that has now become cultural in a huge amount of countries.

It seems to be just accepted that everyone is going to jump to another company every couple years (usually due to companies not giving adequate raises).

Well. I did the last jump because the quality was so bad.

I’ve been working at a small company where I own a lot of the code base.

I got my boss to accept slower initial work that was more systemically designed, and now I can complete projects that would have taken weeks in a few days.

The level of consistency and quality you get by building a proper foundation and doing things right has an insane payoff. And users notice too when they’re using products that work consistently and with low resources.

This is one of the things that frustrates me about my current boss. He keeps talking about some future project that uses a new codebase we’re currently writing, at which point we’ll “clean it up and see what works and what doesn’t.” Meanwhile, he complains about my code and how it’s “too Pythonic,” what with my docstrings, functions for code reuse, and type hints.

So I secretly maintain a second codebase with better documentation and optimization.

How can your code be too pythonic?

Also type hints are the shit. Nothing better than hitting shift tab and getting completions and documentation.
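For anyone who hasn’t seen it, here is a minimal sketch of the kind of “too Pythonic” code being talked about. The function and its name are made up for illustration, not taken from anyone’s codebase in this thread. The point is just that an editor can show the signature and docstring without you opening the source.

```python
from typing import Iterable


def mean_latency_ms(samples: Iterable[float]) -> float:
    """Return the average of the given latency samples in milliseconds.

    Raises ValueError if no samples are given.
    """
    # Hypothetical helper: materialize once so generators work too.
    values = list(samples)
    if not values:
        raise ValueError("need at least one sample")
    return sum(values) / len(values)
```

The type hints tell both the reader and the tooling what goes in and what comes out, and the docstring is what shows up in the completion popup.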

Even if you’re planning to migrate to a hypothetical new code base, getting a bunch of documented modules for free is a huge time saver.

Also migrations fucking suck, you’re an idiot if you think that will solve your problems.

(I write only internal tools and I’m a team of one. We have a whole department of people working on public and customer focused stuff.)

My boss let me spend three months with absolutely no changes to functionality or UI, just to build a better, more configurable back end with a brand new config UI, partly due to necessity (a server constraint changed), otherwise I don’t think it would have ever got off the ground as a project. No changes to master for three months, which was absolutely unheard of.

At times it was a bit demoralising to do so much work for so long with nothing to show for it, but I knew the new back end would bring useful extras and faster, robust changes.

The backend config ui is still in its infancy, but my boss is sooo pleased with its effect. He is used to a turnaround for simple changes of between 1 and 10 days for the last few years (the lifetime of the project), but now he’s getting used to a reply saying I’ve pushed to live between 1 and 10 minutes.

Brand new features still take time, but now that we really understand what it needs to do after the first few years, it was enormously helpful to structure the whole thing to be much more organised around real world demands and make it considerably more automatic.

Feels good. Feels really good.

That’s awesome. Your manager had some rare foresight in that case.

He’s a great boss. He really is.

I had goodwill stored up because like me, he uses the tool several times a day, he really likes it because it makes some tasks far easier (v0.1) and I added loads of extras over the years, and it was me that dreamed it up in the first place.

The new server constraint affected me on the daily but wasn’t going to affect him at all for most of those three months, and even then, not often and there was a workaround for his usage, but he trusted me and he wants my end to be as convenient as his is (very fair minded guy indeed).

I would go a long long way for him. I went to his wedding in 2023 and we sometimes have drinks after work. He knows how it is, has been there, done that and got the T shirt and isn’t afraid to tell truth to power:

You know you like to have X? We’re gonna need Y…

Remember the prioritisation of Y you were going to do?..

Yeah, so no, sorry, we don’t quite have X, partly because of this and that mistake we made, but also we weren’t able to get very close to X because we never got Y.

Genuinely, cue recommitment of senior management to Y in the next quarter! It might not happen, but no shouting, no blaming, and rationality all round.

I don’t think they like it at all when he says stuff like that, but they love that the crises pretty much dwindled out when they put him in charge and as he gradually recruited more people who put more effort into making things better than shouting and blaming, and as the shouters and blamers left to find employment elsewhere where shouting and blaming was effective. It simply does not work on my boss even a little bit, and he simply never does it. Customers now praise his department instead of complain about it, so he gets a lot of leeway from management to do things his way.

Brilliant. It’s so valuable to have a manager that actually treats you like a professional in these situations. Sounds like a diamond in the rough alright.

Some agency when working goes a long way to fostering a really good working relationship. I’m still a lot earlier in my career, so generally in my first non-internship role I was expecting to be given little bits of work like change this button, widen this form, that kind of stuff.

Turns out I’d joined one of those “sink or swim” smaller companies where you have to wear a lot of hats. Initially I thought quite negatively about it but once I started to gain some confidence I realised he was giving me the time and space to properly learn stuff and develop it until it was “good”. He, thankfully, still shoots down my sillier ideas but if I have a good one he throws his full support behind me.

Currently he was like, I need you to investigate how to set up automated fraud prevention checks and flag, let’s say things, for clients to investigate further, and he sent me off for a week to analyse the problem, speak to everyone involved and gather a list of data points and how to calculate them. Then he gave me the time to design the system, including the mental room to develop our first shared lib after .net framework.

Really I’m rambling a bit, but my point is, you can get a lot of good work out of people if you invest in them and allow them some agency. Maybe some can’t work well without constant pressure, but I think a lot of people thrive when supported and enabled correctly by management.

Yeah. Some people love to micro manage and play power games, but it’s so refreshing to work for someone who has the confidence to just concentrate on doing a great job.

Software has a serious “one more lane will fix traffic” problem.

Don’t give programmers better hardware or else they will write worse software. End of.

This is very true. You don’t need a bigger database server, you need an index on that table you query all the time that’s doing full table scans.
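A small sketch of that point, assuming SQLite and a made-up `orders` table (the table, column and index names are all hypothetical). It asks the database for its query plan before and after adding an index, which is usually the first thing worth checking before anyone orders a bigger server.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(10_000)],
)

QUERY = "SELECT * FROM orders WHERE customer_id = ?"


def plan(connection: sqlite3.Connection) -> str:
    """Return SQLite's description of how it would run QUERY."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    # Each row is (id, parent, notused, detail); detail is the readable part.
    return "; ".join(row[3] for row in rows)


plan_before = plan(conn)  # no usable index yet, so a scan of the whole table
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan(conn)   # same query, now a search that uses the new index

print(plan_before)
print(plan_after)
```

The exact wording of the plan differs between SQLite versions, and other databases have their own `EXPLAIN` output, so treat this as an illustration of the workflow rather than something to copy verbatim.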

You never worked on old code. It’s never that simple in practice when you have to make changes to existing code without breaking or rewriting everything.

Sometimes the client wants a new feature that you cannot easily implement and that has to do a lot of different DB lookups that you cannot do in a single query. Sometimes your controller loops over 10000 DB records, and you call a function 3 levels down that suddenly must spawn a new DB query each time it’s called, but you cannot change the parent DB query.
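For what it’s worth, one common way out of that specific shape is to leave the parent query alone and batch the extra lookups instead of running them once per record. Below is a rough sketch assuming SQLite and two invented tables (`records`, `notes`). Real legacy code will be messier, and this only helps when the inner lookup can be keyed by something the loop already has.

```python
import sqlite3
from collections import defaultdict

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE records (id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE notes (record_id INTEGER, body TEXT);
    """
)
conn.executemany(
    "INSERT INTO records (id, name) VALUES (?, ?)",
    [(i, f"r{i}") for i in range(1, 1001)],
)
conn.executemany(
    "INSERT INTO notes (record_id, body) VALUES (?, ?)",
    [(i, f"note for {i}") for i in range(1, 1001, 2)],  # only odd ids get a note
)


def notes_per_row(connection, record_ids):
    """The slow shape: one extra query for every record in the loop."""
    result = {}
    for rid in record_ids:
        rows = connection.execute(
            "SELECT body FROM notes WHERE record_id = ?", (rid,)
        ).fetchall()
        result[rid] = [body for (body,) in rows]
    return result


def notes_batched(connection, record_ids, chunk_size=500):
    """Same result, but one query per chunk of ids instead of one per id."""
    found = defaultdict(list)
    ids = list(record_ids)
    for start in range(0, len(ids), chunk_size):
        chunk = ids[start:start + chunk_size]
        placeholders = ",".join("?" * len(chunk))
        sql = f"SELECT record_id, body FROM notes WHERE record_id IN ({placeholders})"
        for rid, body in connection.execute(sql, chunk):
            found[rid].append(body)
    # Records without notes still get an (empty) entry, like the slow version.
    return {rid: found.get(rid, []) for rid in ids}


# The parent query stays exactly as it was.
record_ids = [rid for (rid,) in conn.execute("SELECT id FROM records")]
```

The chunking is there because databases cap the number of bound parameters per statement. 500 is an arbitrary conservative pick, not a recommendation.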

but you cannot change the parent DB query.

Why not?

This sounds like the “don’t touch working code” nonsense I hear from junior devs and contracted teams. They’re so worried about creating bugs that they don’t fix larger issues and more and more code gets enshrined as “untouchable.” IMO, the older and less understood logic is, the more it needs to be touched so we can expose the bugs.

Here’s what should happen, depending on when you find it:

- grooming/research phase - increase estimates enough to fix it

- development phase - ask senior dev for priority; most likely, you work around for now, but schedule a fix once feature complete; if it’s significant enough, timelines may be adjusted

- testing phase/hotfix - same as dev, but much more likely to put it off

Teams should have a budget for tech debt, and seniors can adjust what tech debt they pick.

In general though, if you’re afraid to touch something, you should touch it, but only if you budget time for it.

That budget is the key. You have to demonstrate/convince the purse holders first. This isn’t always an easy task.

Fair. If that’s not possible, I’ll start looking for another job, because I don’t want to deal with a time bomb that will suddenly explode and force me to come in on a holiday or something to fix it. My current company allocates 10-20% of dev time to tech debt, because we all know it’ll happen, so we budget for it.

“Don’t touch working code” stems from “last person who touched it, owns it” and there’s some shit that it’s just not worth your pay grade to own.

Particularly if you’re a contractor employed to work on something specific

I get that for contractors, get in and get out is the best strategy.

If you’re salary, you own it regardless, so you might as well know what it does.

Where is this even coming from? The guy above me is saying not to give devs better hardware and to teach them to code better.

I followed up with an example of how using indices in a database to boost the performance helped more than throwing more hardware at it.

This has nothing to do with having worked on old code. Stop trying to pull my comment out of context.

But yes you’re right. Adding indexes to a database does nothing to solve adding a new feature in the scenario you described. I also never claimed it did.

That’s why it needs to be written better in the first place

Tell me you never worked on legacy code without telling me…

Kid. Go away

You do accept that bad software has been written, yes? and that some of that software is performing important functions? So how is saying “It needs to be written better in the first place” of any use at all when discussing legacy software?

It’s not, but you’ll still hear it a lot. Funny, no one can agree on what “better” means, especially not the first person who wrote it, who had unclear requirements, too little time, and 3 other big tickets looming. All of these problems descend from management, they don’t always spontaneously come into being because of “bad devs”, although sometimes they do.

Or sharding on a particular column

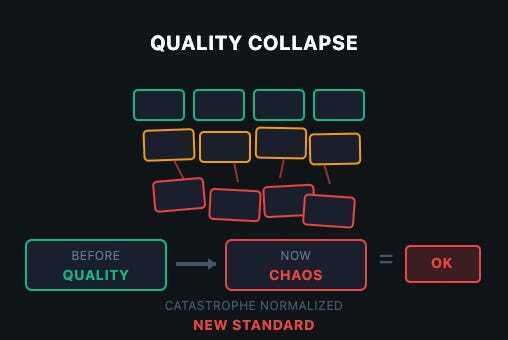

The article is very much off point.

- Software quality wasn’t great in 2018 and then suddenly declined. Software quality has been as shit as legally possible since the dawn of (programming) time.

- The software crisis has never ended. It has only been increasing in severity.

- Ever since, we have been trying to squeeze more programming performance out of software developers at the cost of runtime performance.

The main issue is the software crisis: Hardware performance follows Moore’s law, developer performance is mostly constant.

If the memory of your computer is counted in bytes without a SI-prefix and your CPU has maybe a dozen or two instructions, then it’s possible for a single human being to comprehend everything the computer is doing and to program it very close to optimally.

The same is not possible if your computer has subsystems upon subsystems and even the keyboard controller has more power and complexity than the whole Apollo program combined.

So to program exponentially more complex systems we would need exponentially more software developer budget. But since it’s really hard to scale software developers exponentially, we’ve been trying to use abstraction layers to hide complexity, to share and re-use work (no need for everyone to re-invent the templating engine) and to have clear boundaries that allow for better cooperation.

That was the case way before electron already. Compiled languages started the trend, languages like Java or C# deepened it, and using modern middleware and frameworks just increased it.

OOP complains about the chain “React → Electron → Chromium → Docker → Kubernetes → VM → managed DB → API gateways”. But he doesn’t even consider that even if you run “straight on bare metal” there’s a whole stack of abstractions in between your code and the execution. Every major component inside a PC nowadays runs its own separate dedicated OS that neither the end user nor the developer of ordinary software ever sees.

But the main issue always reverts back to the software crisis. If we had infinite developer resources we could write optimal software. But we don’t so we can’t and thus we put in abstraction layers to improve ease of use for the developers, because otherwise we would never ship anything.

If you want to complain, complain to the managers who don’t allocate enough resources and to the investors who don’t want to dump millions into the development of simple programs. And to the customers who aren’t ok with simple things but who want modern cutting edge everything in their programs.

In the end it’s sadly really the case: Memory and performance gets cheaper in an exponential fashion, while developers are still mere humans and their performance stays largely constant.

So which of these two values SHOULD we optimize for?

The real problem in regards to software quality is not abstraction layers but “business agile” (as in “business doesn’t need to make any long term plans but can cancel or change anything at any time”) and lack of QA budget.

we would need exponentially more software developer budget.

Are you crazy? Profit goes to shareholders, not to invest in the project. Get real.

The software crysis has never ended

MAXIMUM ARMOR

Shit, my GPU is about to melt!

I agree with the general idea of the article, but there are a few wild takes that kind of discredit it, in my opinion.

“Imagine the calculator app leaking 32GB of RAM, more than older computers had in total” - well yes, the memory leak went on to waste 100% of the machine’s RAM. You can’t leak 32GB of RAM on a 512MB machine. Correct, but hardly mind-bending.

“But VSCodium is even worse, leaking 96GB of RAM” - again, 100% of available RAM. This starts to look like a bad faith effort to throw big numbers around.

“Also this AI ‘panicked’, ‘lied’ and later ‘admitted it had a catastrophic failure’” - no it fucking didn’t, it’s a text prediction model, it cannot panic, lie or admit something, it just tells you what you statistically most want to hear. It’s not like the language model, if left alone, would have sent an email a week later to say it was really sorry for this mistake it made and felt like it had to own it.

You can’t leak 32GB of RAM on a 512MB machine.

32gb swap file or crash. Fair enough point that you want to restart computer anyway even if you have 128gb+ ram. But calculator taking 2 years off of your SSD’s life is not the best.

It’s a bug and of course it needs to be fixed. But the point was that a memory leak leaks memory until it’s out of memory or the process is killed. So saying “It leaked 32GB of memory” is pointless.

It’s like claiming that a puncture on a road bike is especially bad because it leaks 8 bar of pressure instead of the 3 bar of pressure a leak on a mountain bike might leak, when in fact both punctures just leak all the pressure in the tire and in the end you have a bike you can’t use until you fixed the puncture.

Yeah, that’s quite on point. Memory leaks until something throws an out of memory error and crashes.

What makes this really seem like a bad faith argument instead of a simple misunderstanding is this line:

Not used. Not allocated. Leaked.

OOP seems to understand (or at least claims to understand) the difference between allocating (and wasting) memory on purpose and a leak that just fills up all available memory.

So what does he want to say?

Yeah, I hate that agile way of dealing with things. Business wants prototypes ASAP, but if one is actually deemed useful, you have no budget to productize it, which means that if you don’t want to take all the blame for a crappy app, you have to invest heavily in all of the prototypes. Prototypes that are called next-gen projects but get cancelled nine times out of ten 🤷🏻♀️. Make it make sense.

This. Prototypes should never be taken as the basis of a product, that’s why you make them. To make mistakes in a cheap, discardable format, so that you don’t make these mistakes when making the actual product. I can’t remember a single time though that this was what actually happened.

They just label the prototype an MVP and suddenly it’s the basis of a new 20 year run time project.

In my current job, they keep switching around everything all the time. Got a new product, super urgent, super high-profile, highest priority, crunch time to get it out in time, and two weeks before launch it gets cancelled without further information. Because we are agile.

THANK YOU.

I migrated services from LXC to kubernetes. One of these services has been exhibiting concerning memory footprint issues. Everyone immediately went “REEEEEEEE KUBERNETES BAD EVERYTHING WAS FINE BEFORE WHAT IS ALL THIS ABSTRACTION >:(((((”.

I just spent three months doing optimization work. For memory/resource leaks in that old C codebase. Kubernetes didn’t have fuck-all to do with any of those (which is obvious to literally anyone who has any clue how containerization works under the hood). The codebase just had very old-fashioned manual memory management leaks as well as a weird interaction between jemalloc and RHEL’s default kernel settings.

The only reason I spent all that time optimizing and we aren’t just throwing more RAM at the problem? Due to incredible levels of incompetence business-side I’ll spare you the details of, our 30 day growth predictions have error bars so many orders of magnitude wide that we are stuck in a stupid loop of “won’t order hardware we probably won’t need but if we do get a best-case user influx the lead time on new hardware is too long to get you the RAM we need”. Basically the virtual price of RAM is super high because the suits keep pinky-promising that we’ll get a bunch of users soon but are also constantly wrong about that.

All of the examples are commercial products. The author doesn’t know or doesn’t realize that this is a capitalist problem. Of course, there is bloat in some open source projects. But nothing like what is described in those examples.

And I don’t think you can avoid that if you’re a capitalist. You make money by adding features that maybe nobody wants. And you need to keep doing something new. Maintenance doesn’t make you any money.

So this looks like AI plus capitalism.

Sometimes, I feel like writers know that it’s capitalism, but they don’t want to actually call the problem what it is, for fear of scaring off people who would react badly to it. I think there’s probably a place for this kind of oblique rhetoric, but I agree with you that progress is unlikely if we continue pussyfooting around the problem

But the tooling gets bloatier too, even if it does the same. Extreme example: Android apps.

You make money by adding features that maybe nobody wants

So, um, who buys them?

A midlevel director who doesn’t use the tool but thinks all the features the salesperson mentioned seem cool

Stockholders

Stockholders want the products they own stock in to have AI features so they won’t be ‘left behind’

Sponsors maybe? Adding features because somebody influential wants them to be there. Either for money (like shovelware) or soft power (strengthening ongoing business partnerships)

Capitalism’s biggest lie is that people have freedom to choose what to buy. They have to buy what the ruling class sells them. When every billionaire is obsessed with chatbots, every app has a chatbot attached, and if you don’t want a chatbot, sucks to be you then, you have to pay for it anyway.

It’s just about convincing investors that you’re going places. Customers don’t have to want your new features or buy more of your stuff because it has them. Users certainly don’t have to want or use them. Just do buzzword-driven development and keep the investors convinced that you’re the future.

“Open source” is not contradictory to “capitalist”, just involves a fair bit of industry alliances and\or freeloading.

“Open source” was literally invented to make Free software palatable to capital.

It absolutely is to the majority of capitalists unless it still somehow directly benefits them monetarily

Accept that quality matters more than velocity. Ship slower, ship working. The cost of fixing production disasters dwarfs the cost of proper development.

This has been a struggle my entire career. Sometimes, the company listens. Sometimes they don’t. It’s a worthwhile fight but it is a systemic problem caused by management and short-term profit-seeking over healthy business growth

“Apparently there’s never the money to do it right, but somehow there’s always the money to do it twice.”

Management never likes to have this brought to their attention, especially in a Told You So tone of voice. One thinks if this bothered pointy-haired types so much, maybe they could learn from their mistakes once in a while.

We’ll just set up another retrospective meeting and have a lessons learned.

Then we won’t change anything based off the findings of the retro and lessons learned.

Post-mortems always seemed like a waste of time to me, because nobody ever went back and read that particular Confluence page (especially the executives who made the same mistake again)

Post mortems are for, “Remember when we saw something similar before? What happened and how did we handle it?”

They should also include the recommended steps to take to prevent the issue again, which would allow you to investigate why the changes you made (you were allowed to make the changes, right? Manglement let you make the changes?) didn’t prevent the issue

Twice? Shiiiii

Amateur numbers, lol

That applies in so many industries 😅 like you want it done right… Or do you want it done now? Now will cost you 10x long term though…

Welp now it is I guess.

You can have it fast, you can have it cheap, or you can have it good (high quality), but you can only pick two.

Getting 2 is generous sometimes.

There’s levels to it. True quality isn’t worth it, absolute garbage costs a lot though. Some level that mostly works is the sweet spot.

The sad thing is that velocity pays the bills. Quality it seems, doesn’t matter a shit, and when it does, you can just patch up the bits people noticed.

This is survivorship bias. There’s probably uncountable shitty software that never got adopted. Hell, the E.T. video game was famous for it.

I don’t make games, but fine. Baldur’s Gate 3 (PS5 co-op) and Skyrim (Xbox 360) had more crashes than any games I’ve ever played.

Did that stop either of them being highly rated top selling games? No. Did it stop me enjoying them? No.

Quality feels important, but past a certain point, it really isn’t. Luck, knowing the market, maneuverability. This will get you most of the way there. Look at Fortnite. It was a wonky building game they quickly cobbled into a PUBG clone.

I love crappy slapped together indie games. Headliners and Peak come to mind. Both have tons of bugs but the quality is there where it matters. Peak has a very unique health bar system I love, and Headliners is constantly working on the balance and fun, not on the graphics or collision bugs. Both of those groups had very limited resources and they spent them where they matter, in high quality mechanics that are fun to play.

Skyrim is old enough to drive a car now, but back then its main mechanic was the open world hugeness. They made damn sure to cram that world full of tons of stuff to do, and so for the most part people forgave bugs that didn’t detract from that core experience.

BG3 was basically perfect. I remember some bugs early on but that’s a very high quality game. If you’re expecting every game you play to live up to that bar, you’re going to be very disappointed.

Quality does matter, but it only matters when it’s core to the experience. No one is going to care if your first-person-shooter with tons of lag and shitty controls has an amazing interactive menu and beautiful animations.

It’s not the amount of quality, it’s where you apply it.

(I’ve had that robot game that came with the PS5 crash on me, but folding@home on the PS2 never did, imagine that)

Quality in this economy? We need to fire some people to cut costs and use telemetry to make sure everyone that’s left uses AI to pay AI companies because our investors demand it because they invested all their money in AI and they see no return.

Fabricated 4,000 fake user profiles to cover up the deletion

This has got to be a reinforcement learning issue, I had this happen the other day.

I asked Claude to fix some tests, so it fixed the tests by commenting out the failures. I guess that’s a way of fixing them that nobody would ever ask for.

Absolutely moronic. These tools do this regularly. It’s how they pass benchmarks.

Also you can’t ask them why they did something. They have no capacity for introspection, they can’t read their input tokens, they just make up something that sounds plausible for “what were you thinking”.

Also you can’t ask them why they did something. They have no capacity for introspection, (…) they just make up something that sounds plausible for “what were you thinking”.

It’s uncanny how it keeps becoming more human-like.

No. No it doesn’t, ALL human-like behavior stems from its training data … that comes from humans.

The model we have at work tries to work around this by including some checks. I assume they get farmed out to specialised models and receive the output of the first stage as input.

Maybe it catches some stuff? It’s better than pretend reasoning but it’s very verbose so the stuff that I’ve experimented with - which should be simple and quick - ends up being more time consuming than it should be.

I’ve been thinking of having a small model like a long context qwen 4b run and do quick code review to check for these issues, then just correct the main model.

It feels like a secondary model that only exists to validate that a task was actually completed could work.
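For the specific "fixed the tests by commenting them out" failure, the completion check doesn't even need a second model. A minimal sketch, assuming the tests are plain Python files with `test_*` functions (the helper names `collect_test_names` and `missing_tests` are made up for illustration): parse the file before and after the change and see whether any tests disappeared.

```python
import ast


def collect_test_names(source: str) -> set:
    """Return the names of all test_* functions defined in a Python source string."""
    tree = ast.parse(source)
    return {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        and node.name.startswith("test_")
    }


def missing_tests(before: str, after: str) -> set:
    """Tests that existed before the 'fix' but are gone (deleted or commented out) after it."""
    return collect_test_names(before) - collect_test_names(after)


# Hypothetical example: the "fix" comments out the failing test.
before = '''
def test_add():
    assert 1 + 1 == 2

def test_divide():
    assert 1 / 0 == 0
'''

after = '''
def test_add():
    assert 1 + 1 == 2

# def test_divide():
#     assert 1 / 0 == 0
'''

print(missing_tests(before, after))  # {'test_divide'}
```

This only catches whole tests vanishing. It won't notice an assertion being gutted inside a test that still exists, or a skip marker being added, so it's a cheap first gate rather than a replacement for review.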

Yeah, it can work, because it’ll trigger the recall of different types of input data. But it’s not magic and if you have a 25% chance of the model you’re using hallucinating, you probably end up still with an 8.5% chance of getting bullshit after doing this.
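To put rough numbers on that: a back-of-the-envelope sketch, assuming the checker misses a bad output with some fixed probability that is independent of the first model's errors (a generous assumption, since similar models tend to share blind spots). The 25% and 8.5% figures are from the comment above; the function name and everything else is illustrative.

```python
def residual_rate(p_bad: float, p_miss: float) -> float:
    """Chance a bad output survives review, assuming the reviewer misses
    a bad output with probability p_miss, independently of the generator."""
    return p_bad * p_miss


p_bad = 0.25  # first model produces nonsense 25% of the time

# Best case under this model: the checker fails independently, also 25% of the time.
print(residual_rate(p_bad, 0.25))  # 0.0625

# An 8.5% residual rate corresponds to a checker that misses about a third of bad outputs.
implied_miss = 0.085 / p_bad
print(round(implied_miss, 2))  # 0.34
```

So even with fully independent errors you only get down to about 6%, and any correlation between the two models pushes the number back up. This also ignores the checker rejecting good outputs, which costs time rather than correctness.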

Another big problem not mentioned in the article is companies refusing to hire QA engineers to do actual testing before releasing.

The last two American companies I worked for had fired all the QA engineers or refused to hire any. Engineers were supposed to “own” their features and test them themselves before release. Obviously this can’t provide the same level of testing, so the software gets released full of bugs and only the happy path works.

“AI just weaponized existing incompetence.”

Daamn. Harsh but hard to argue with.

Weaponized? Probably not. Amplified? ABSOLUTELY!

It’s like taping a knife to a crab. Redundant and clumsy, yet strangely intimidating

Love that video. Although it wasn’t taped on. The crab was full on about to stab a mofo

Yeah, crabby boi fully had stabbin’ on his mind.

I’m glad that they added CrowdStrike to that article, because it adds a whole extra level of incompetence in the software field. The CS incident should never have happened in the first place, and wouldn’t have if Microsoft had properly enforced the stance they claim to have regarding driver security and the kernel.

The entire reason CS was able to create that systemic failure was because they were (still are?) abusing the system MS has in place for signing kernel-level drivers. The process dodges MS review by shipping a standalone driver that then live-patches itself, instead of requiring every update to be reviewed and certified. This type of system allowed a live update to directly modify kernel behavior via the already certified driver. Un-certified code should never have been allowed to be remotely injected into a secure location in the first place. It was a failure on every level for both MS and CS.

Anyone else remember a few years ago when companies got rid of all their QA people because something something functional testing? Yeah.

The uncontrolled growth in abstractions is also very real and very damaging, and now that companies are addicted to the pace of feature delivery that this whole slipshod situation has made normal, they can’t give it up.

I must have missed that one

That was M$, not an industry thing.

It was not just MS. There were those who followed that lead and announced that it was an industry thing.

I wonder if this ties into our general disposability culture (throwing things away instead of repairing, etc)

That and also man hour costs versus hardware costs. It’s often cheaper to buy some extra ram than it is to pay someone to make the code more efficient.

Sheeeit… we haven’t been prioritizing efficiency, much less quality, for decades. You’re so right about throwing hardware at problems. Management makes mouth-noises about quality, but when the budget hits the road, it’s clear where the priorities are. If efficiency were a priority - much less quality - vibe coding wouldn’t be a thing. Low-code/no-code wouldn’t be a thing. People building applications on SAP or Salesforce wouldn’t be a thing.

Yes, if you factor in the source of disposable culture: capitalism.

“Move fast and break things” is the software equivalent of focusing solely on quarterly profits.

Planned Obsolescence … designing things for a short lifespan so that things always break and people are always forced to buy the next thing.

It all originated with light bulbs 100 years ago … inventors did design incandescent light bulbs that could last for years but then the company owners realized it wasn’t economically feasible to produce a light bulb that could last ten years because too few people would buy light bulbs. So they conspired to engineer a light bulb with a limited life that would last long enough to please people but short enough to keep them buying light bulbs often enough.

Not the light bulbs. They improved light quality and reduced energy consumption per unit of light by increasing filament temperature, which reduced bulb life. Net win for the consumer.

You can still make an incandescent bulb last long by undervolting it until it glows orange, but it’ll be bad at illuminating, and it’ll consume almost as much electricity as when glowing yellowish white (standard).

Edison was DEFINITELY not unique or new in how he was a shithead looking for money more than inventing useful things… Like, at all.

Non-technical hiring managers are a bane for developers (and probably bad for any company). Just saying.